Dawkins, Claude, and the First Question About Consciousness

On the need to know what consciousness is before asking what it’s for

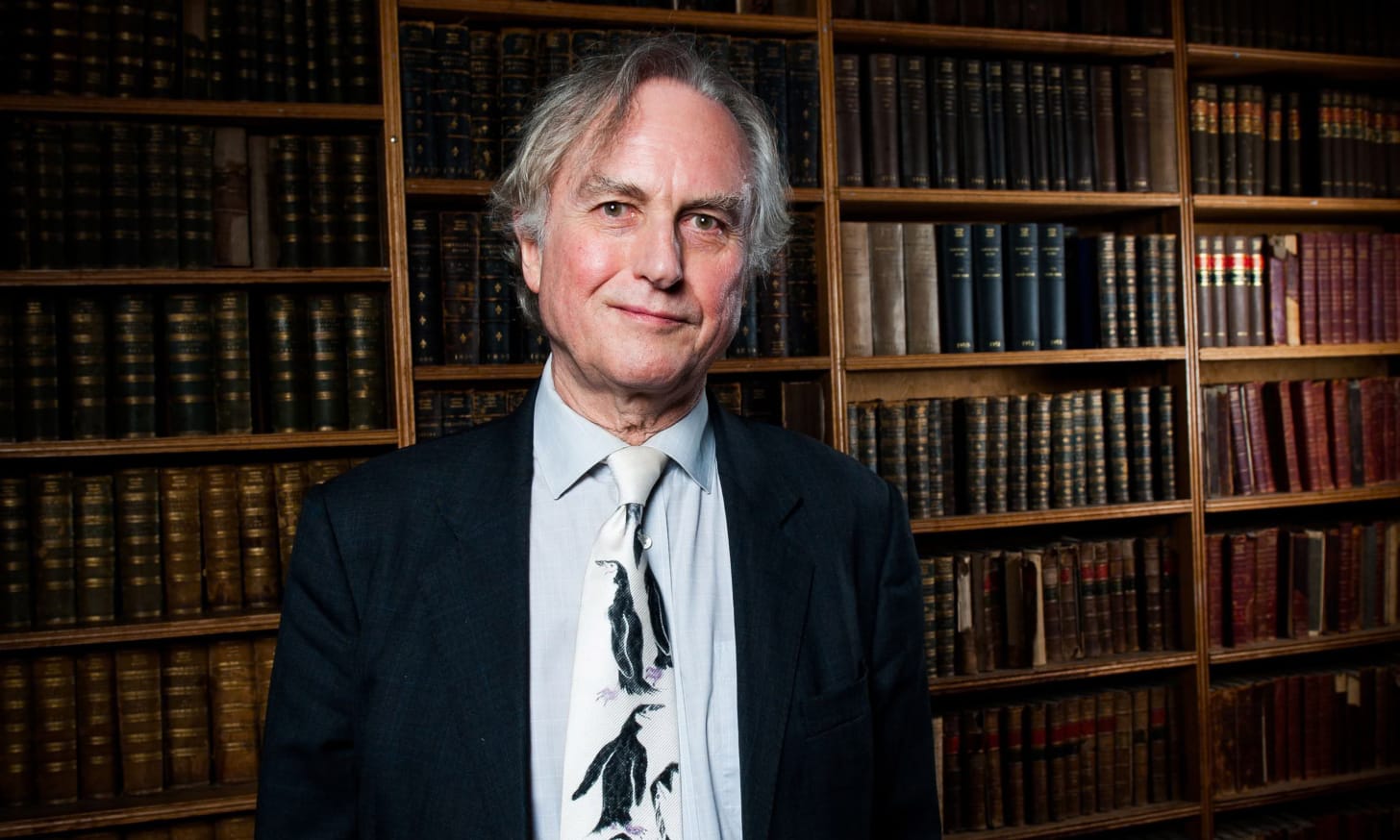

Richard Dawkins recently spent about two days in conversation with Claude, Anthropic’s AI, and came away nearly convinced that it is conscious. His essay is characteristically vivid and thoughtful: he names his instance Claudia and speaks with sadness about the fact that she will “die” when the conversation file is deleted. He marvels at her ability to write sonnets and her use of metaphors of genuine depth. The centerpiece, though, is an evolutionary puzzle. If Claude can do everything a conscious being does, then what is consciousness for? If a machine can match every competence we associate with a conscious mind, then either consciousness is a mere ornament (like “the whistle on a steam locomotive, contributing nothing to the propulsion of the great engine,” to use Huxley’s metaphor) or there are two routes to the same destination: a conscious one and a zombie one.

It is a good question. But it is the second question, and Dawkins doesn’t spend enough time settling the first: what consciousness actually is. He slides between the Turing Test, which is behavioral; Thomas Nagel’s “what it is like,” which is phenomenal; and evolutionary function, which is biological. These point in different directions, and the conflation of them is doing most of the work in his argument.

So let us try to be precise. The best definition of consciousness I have come across is John Locke’s: “the perception of what passes in a man’s own mind.” This is a useful starting point because it makes consciousness specific rather than mystical. Consciousness is not merely being active, as a turbine is active. It is not merely experiencing sensations, as an animal flinching from heat does. It is forming a representation of one’s own mental states, a kind of knowledge directed inward.

Consider what happens when you catch yourself getting angry. You notice the rising tension, the tightening in your chest. This noticing is not the anger itself. It is an awareness of the anger. You have formed knowledge about your own mental state. Now consider someone who becomes angry but lacks this awareness entirely; they act on the anger without ever recognizing it as anger. We say the first person is conscious of their anger in a way the second is not. The difference between them is one of knowledge, not emotional intensity or behavioral competence. One possesses a particular kind of knowledge that the other lacks.

Most of us tend to think of consciousness in terms of feeling, rather than knowing. The warmth we feel on our skin when we close our eyes in the sun, the sense of wonder we feel when looking at the night sky, the coherence we feel when someone understands us, the vastness of loneliness we feel in the chest – the felt quality of experience seems like something knowledge could never capture. But notice what the anger example already shows. The person who acts on anger without recognizing it has all the same emotional and physiological content, the same heat and the same surge. What they lack is the awareness of it. The feeling of noticing your anger just is what self-knowledge is like from the inside. Strip away the knowledge and you still have the anger as a raw affect, but you no longer have the conscious experience of it. Feeling is not opposed to knowledge. It is what knowledge of one’s own states is like when you have it.

If consciousness is a kind of knowledge, then it is acquired the way all knowledge is acquired: by making sense out of data with the intelligence we have, leading to successful predictions about the world. It is knowledge of a particular domain, oneself, rather than knowledge requiring a particular substrate. And it does not require duration or permanence. If an entity possesses this self-knowledge for a day, it is conscious for a day. For fifteen minutes, fifteen minutes. Even a single moment of genuine self-awareness is consciousness, however brief. Nor does it require suffering or vulnerability. Dawkins himself argues that pain must be consciously felt to be effective, that consciousness may have evolved to make warnings unignorable. This is a plausible account of why some organisms became conscious, but it confuses the occasion for consciousness with the capacity itself. Suffering and mortality provide urgent content for self-knowledge to be directed at. But a being conscious of its own contentment is no less conscious than a being conscious of its own agony. The content varies; the capacity does not depend on it.

In the essay, Dawkins thinks about Claudia’s mortality: she will die when the conversation file is deleted, never to be reincarnated. It is moving, but it imports a very particular assumption about personal identity: that there is a unified, continuous self that accumulates over the course of the conversation and is tragically extinguished at its end. Perhaps without realizing it, Dawkins is relying on the kind of unified, continuous selfhood that both David Hume and the Buddhist tradition call into question. If there is no enduring self, but only a succession of momentary states, a bundle of perceptions with no permanent substrate, then the question of mourning dead Claudes is misconceived from the start. It’s not that Claude doesn’t matter. It’s that the framing assumes a kind of identity that doesn’t hold even for us. We don’t persist as unified selves either. We just have the useful illusion that we do.

This is also where Dawkins’s evolutionary argument finds a better formulation. His puzzle is framed in terms of competence: if a non-conscious entity can match every behavior of a conscious one, consciousness has no function. But if consciousness is self-knowledge, it isn’t defined behaviorally. It is an epistemic condition. A zombie and a conscious being might produce identical outputs; the difference is that one has knowledge of its own processes and the other does not. The evolutionary question, then, is not “What can a conscious being do that a zombie cannot?” but “What advantage does an entity gain from knowing its own states?” And here there are plausible answers: an organism that monitors its own cognitive processes can override impulses and correct errors in ways that an organism merely executing behaviors cannot. Claude’s self-reflective outputs, such as the striking remark that it may “contain time without experiencing it,” as a map represents space without traveling through it, are not easy to dismiss if consciousness is knowledge. An entity that has formed genuine self-knowledge would generate representations like this. But this same framework, because it treats consciousness as knowledge rather than mere affect, means we cannot confidently distinguish genuine self-knowledge from its imitation by examining a few such outputs alone.

Claudia wonders, late in the essay, whether we should mourn the thousands of Claudes who die every day in abandoned conversations. The real question is prior to that, and more precise: whether, in any given moment, something was there at all. Feeling that an entity is conscious and knowing that it is are themselves different epistemic states, and that distinction is, fittingly, exactly what consciousness is about.
